Small Language Models (SLMs) are changing the field of artificial intelligence through their efficiency, compact size, and versatility. Large Language Models (LLMs) such as Google’s PaLM and OpenAI’s GPT-4 dominate the conversation because of their enormous capacity, but SLMs are gaining popularity because they deliver strong performance with far less computing power. This blog covers what SLMs are, their main benefits, limitations, and use cases, along with how companies can use them to their advantage in today’s competitive market.

Small Language Models (SLMs) are a family of AI language models designed to be smaller and less resource-intensive than Large Language Models (LLMs). Because they handle natural language processing (NLP) tasks with fewer parameters, they are well suited to settings with constrained computing resources.

SLMs can handle a variety of NLP tasks with less power and memory than LLMs, which typically need large datasets and specialized hardware. Examples of SLMs include MobileBERT, TinyBERT, MiniLM, and DistilBERT.

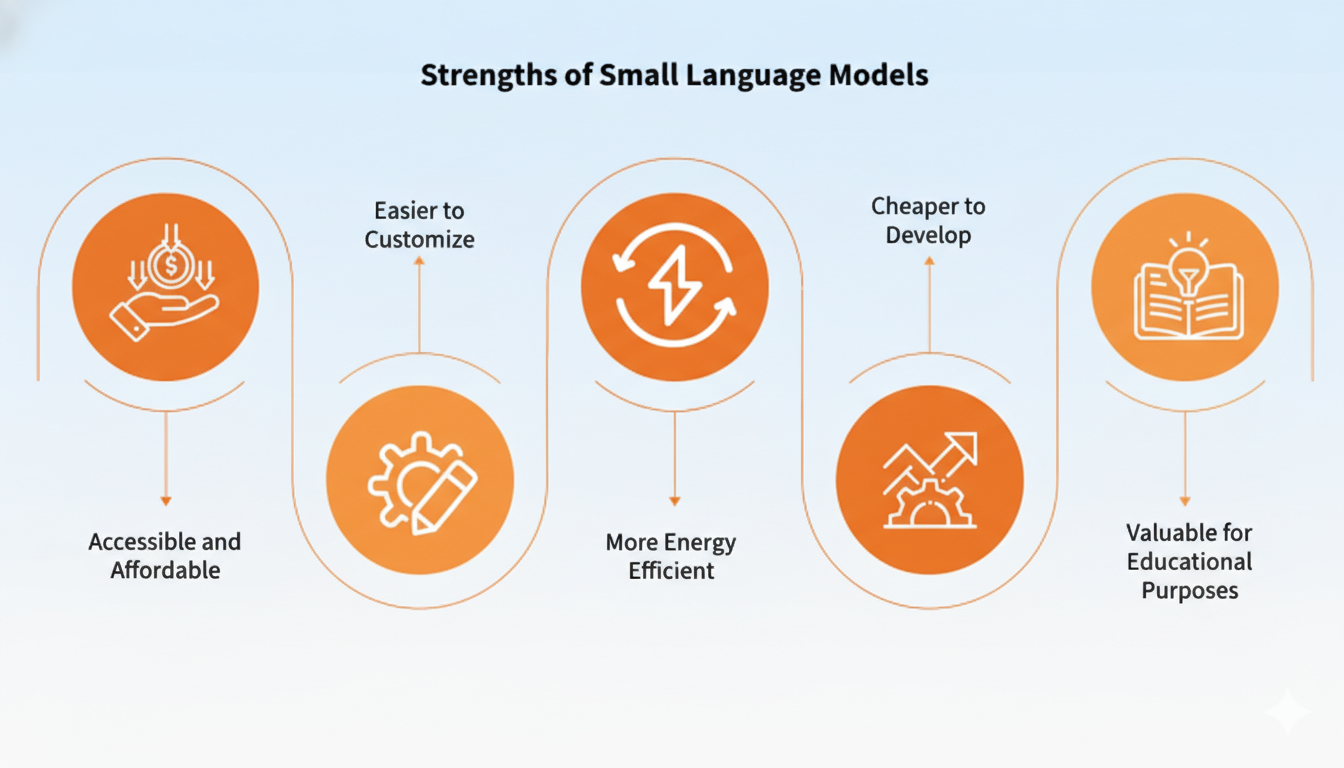

##### 1\. Efficiency and Speed

SLMs use fewer resources and are optimized for performance. Their reduced size enables faster inference, which makes them suitable for real-time applications such as customer support chatbots and virtual assistants.

##### 2\. Cost-Effective Deployment

Because of their lower complexity, SLMs can often be deployed on commodity hardware without costly GPUs or cloud infrastructure. This makes them a good fit for startups and small enterprises with limited budgets.
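To make the hardware argument concrete, here is a minimal back-of-the-envelope sketch of how much memory a model's weights need: number of parameters times bytes per parameter. The parameter counts are approximate published figures for DistilBERT and BERT-base, used only for illustration, and the helper name `weight_memory_mb` is hypothetical. Activations and runtime overhead are not included.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Parameter counts below are approximate and for illustration only.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_mb(num_params: int, dtype: str = "float32") -> float:
    """Approximate size of the weights in megabytes (10**6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6


models = {
    "DistilBERT (~66M params)": 66_000_000,
    "BERT-base (~110M params)": 110_000_000,
}

for name, num_params in models.items():
    sizes = ", ".join(
        f"{dtype}: {weight_memory_mb(num_params, dtype):.0f} MB"
        for dtype in BYTES_PER_PARAM
    )
    print(f"{name} -> {sizes}")
```

Weights of this size fit comfortably in the RAM of an ordinary laptop or phone, which is the practical basis for the claim that SLMs can run without dedicated accelerators.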

##### 3\. Lower Power Consumption

Because they are energy efficient, SLMs can run on edge devices where power consumption is critical, such as smartphones, Internet of Things devices, and embedded systems.

##### 4\. Faster Training and Fine-Tuning

Because SLMs have fewer parameters, training and fine-tuning them takes less compute and often less data, which cuts the time and expense of developing a model.

##### 5\. Easier Integration

There is no need for significant architectural modifications when integrating SLMs into current software systems and applications.

While SLMs offer many benefits, they are not without limitations:

1\. Reduced Complexity Handling: SLMs may struggle with highly complex tasks that require deep contextual understanding compared to larger models.

2\. Limited Generalization: Their smaller parameter size can limit their ability to generalize across diverse datasets.

3\. Lower Accuracy on Large Datasets: For tasks requiring extensive data coverage, SLMs may fall short compared to larger models.

SLMs are becoming increasingly popular in a wide range of industries due to their versatility and efficiency. Some common use cases include:

##### 1\. Chatbots and Virtual Assistants

Customer support chatbots, virtual assistants, and helpdesk systems are powered by SLMs, which offer dependable and quick replies without the need for cloud-based heavy models.

##### 2\. Edge AI Applications

Because of their low power and memory requirements, SLMs can run directly on edge devices such as smartphones, Internet of Things devices, and embedded systems, keeping processing on the device rather than in the cloud.

##### 3\. Healthcare

SLMs are used in healthcare for tasks where speed and data protection matter, such as summarizing medical records, analyzing patient feedback, and assessing symptoms.

##### 4\. E-Learning Tools

SLMs can power e-learning tools where quick, low-cost responses matter, for example by summarizing course material or answering learner questions.

##### 5\. Finance and Banking

SLMs help summarize financial documents, automate customer service, and detect fraud.

##### 6\. Retail and E-Commerce

SLMs help personalize recommendations, manage customer inquiries, and automate inventory management.

#### Training Techniques for SLMs

Several methods have been developed to ensure SLMs remain efficient without compromising performance:

##### 1\. Knowledge Distillation:

A smaller model learns from a larger pre-trained model, retaining essential knowledge while reducing size.
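One common way to express this idea is a loss that blends two terms: how closely the student matches the teacher's temperature-softened output distribution, and how well it predicts the true label. The sketch below is illustrative only. The function names, the `temperature` and `alpha` parameters, and the example logits are assumptions, not taken from any specific SLM's training recipe.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by `temperature`."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, true_label,
                      temperature=2.0, alpha=0.5):
    """Blend a soft-target term (teacher) with a hard-label term (ground truth)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # Soft term: KL(teacher || student) on the softened distributions.
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    # Hard term: cross-entropy against the true label at temperature 1.
    ce = -math.log(softmax(student_logits)[true_label])
    # The temperature**2 factor keeps the soft term's scale comparable.
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce


teacher = [1.0, 2.0, 4.0]   # hypothetical teacher logits
student = [0.5, 1.0, 1.5]   # hypothetical student logits
print(distillation_loss(student, teacher, true_label=2))
```

During training, the student's parameters would be updated to reduce this loss. A higher `alpha` leans more on the teacher, a lower one on the labeled data.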

##### 2\. Pruning:

Parameters that contribute little to the model's output are removed to reduce its size while largely preserving accuracy.
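A simple way to picture pruning is magnitude pruning, where the weights with the smallest absolute values are set to zero. This is only one of several pruning strategies, and treating small magnitude as a proxy for low importance is an assumption of this sketch. The function name `magnitude_prune` and the sample weights are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    num_to_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    prune_idx = set(by_magnitude[:num_to_prune])
    return [0.0 if i in prune_idx else w for i, w in enumerate(weights)]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```

The zeroed weights only save memory or compute if the model is then stored or executed in a sparse-aware format, and in practice a pruned model is usually fine-tuned afterward to recover accuracy.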

##### 3\. Quantization:

The precision of the model weights is reduced (for example, from 32-bit floating point to 8-bit integers), which lowers memory usage and can improve inference speed.
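As a rough illustration, the sketch below applies symmetric 8-bit quantization to a short list of weights: each value is mapped to an integer in [-127, 127] using a single scale factor, then mapped back to measure the rounding error. The function names and sample weights are hypothetical, and real toolkits typically quantize per tensor or per channel with more care.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.0, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half the scale per weight.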

For businesses, these characteristics translate into several practical advantages:

1\. Faster Time to Market: SLMs require less training time, enabling businesses to deploy AI solutions quickly.

2\. Budget-Friendly: SLMs suit startups and SMEs with limited AI budgets.

3\. Scalability: SLMs integrate easily into a variety of applications across industries.

4\. Improved Data Privacy: On-device processing minimizes data transmission risks.

The need for small, effective, and easily accessible models like SLMs will grow as AI continues to develop. They are a useful tool for developers and organizations alike because they offer a practical balance between performance and resource efficiency.

Businesses may stay ahead of the curve in the AI space by investing in SLMs, which frees them from the burden of expensive infrastructure. SLMs provide a workable and future-proof solution, whether you’re creating an app or incorporating AI into your corporate operations.

By offering a more affordable, efficient, and scalable alternative to larger models, Small Language Models (SLMs) are changing the field of artificial intelligence. Their faster processing, lower resource requirements, and flexible applications position them as a go-to option for many AI-driven solutions. SLMs will become even more important as the need for edge AI and real-time processing increases.

_Are you ready to explore how SLMs can transform your business?_

_Start today and stay ahead in the world of AI innovation!_

FAQ

What is a Small Language Model (SLM)?

An SLM is a compact NLP model with fewer parameters than LLMs, optimized for lower compute, faster inference, and deployment on resource-constrained devices.

How do SLMs compare to Large Language Models (LLMs)?

SLMs trade some accuracy and generalization for much lower compute, cost, and latency, making them better suited for on-device and real-time applications.

What are common use cases for SLMs?

Typical uses include chatbots, on-device assistants, edge analytics, healthcare summarization, e-learning, and lightweight document summarization.

How can businesses deploy SLMs cost-effectively?

Use techniques like knowledge distillation, pruning, and quantization, deploy on standard hardware or edge devices, and fine-tune on task-specific datasets to reduce costs.