AI systems based on large language models (LLMs) are increasingly used as agents that interact with users and the world. To do this successfully, LLMs need to construct internal representations of the world and estimate the probability that each of these representations is accurate. Take personalized recommendations, for example: the LLM needs to gradually infer the user’s preferences from their choices over the course of multiple interactions.

Bayesian inference defines the optimal way to perform such updates. By implementing this strategy, LLMs could optimize user interactions by updating their estimates of the user’s preferences as new info about the user arrives. But without specific training, LLMs often default to simple heuristics — like assuming everyone wants the cheapest option — instead of inferring a specific user's unique preferences.

In “Bayesian teaching enables probabilistic reasoning in large language models”, we teach the LLMs to reason in a Bayesian manner by training them to mimic the predictions of the Bayesian model, which defines the optimal way to reason about probabilities. We find that this approach not only significantly improves the LLM’s performance on the particular recommendation task on which it is trained, but also enables generalization to other tasks. This suggests that this method teaches the LLM to better approximate Bayesian reasoning. More generally, our results indicate that LLMs can effectively learn reasoning skills from examples and generalize those skills to new domains.

As with humans, to be effective, an LLM’s user interactions require continual updates to its probabilistic estimates of the user’s preferences based on each new interaction with them. Here we ask: do LLMs act as if they have probabilistic estimates that are updated as expected from optimal Bayesian inference? To the extent that the LLM’s behavior deviates from the optimal Bayesian strategy, how can we minimize these deviations?

To test this, we used a simplified flight recommendation task, in which the LLMs interact as assistants with a simulated user for five rounds. In each round, three flight options were presented to both the user and the assistant. Each flight was defined by:

- A departure time

- A duration

- A number of stops

- A cost

Each simulated user was characterized by a set of preferences: for each feature, they could have a strong or weak preference for high or low values, or no preference regarding the feature.

For example:

- Prefer shorter flights

- Prefer cheaper tickets

- Prefer fewer stops

Comparing with a Bayesian Assistant§

We compared the LLMs’ behavior to that of a model called the Bayesian Assistant, which follows the optimal Bayesian strategy.

This model:

- Maintains a probability distribution reflecting its estimates of the user’s preferences

- Uses Bayes’ rule to update this distribution as new information about the user’s choices becomes available

Unlike many real-world scenarios where Bayesian reasoning is difficult to implement computationally, this controlled setting allowed us to precisely measure how far LLM behavior deviates from optimal Bayesian reasoning.

The goal of the assistant was simple:

Recommend the flight that matches the user’s choice.

At the end of each round:

- The user indicated whether the assistant chose correctly

- The correct answer was provided

Bayesian belief updates§

A Bayesian assistant updates its estimates about the user’s preferences after observing evidence (the user's choice) from each round.

Crucially:

The assistant cannot directly access the user’s preferences, making this a challenging probabilistic reasoning task.

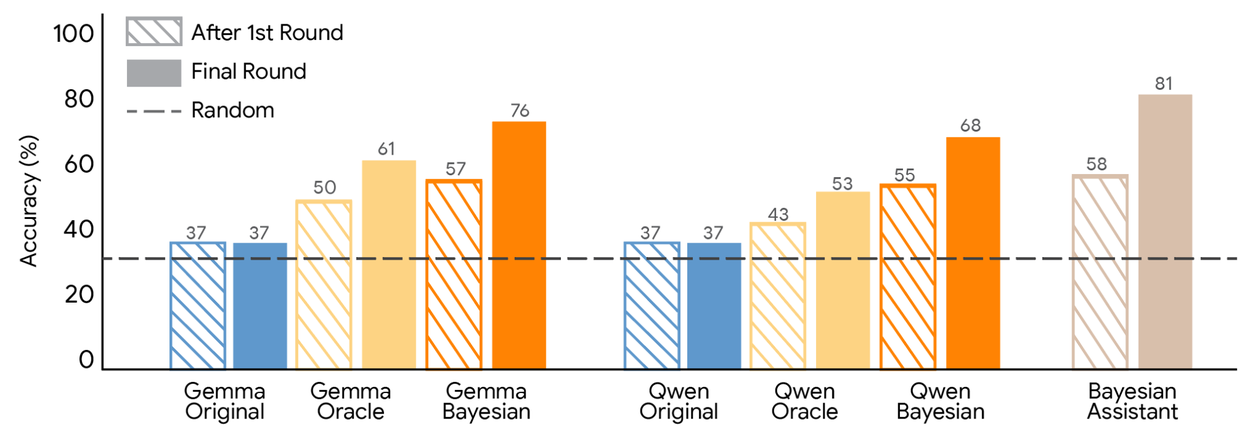

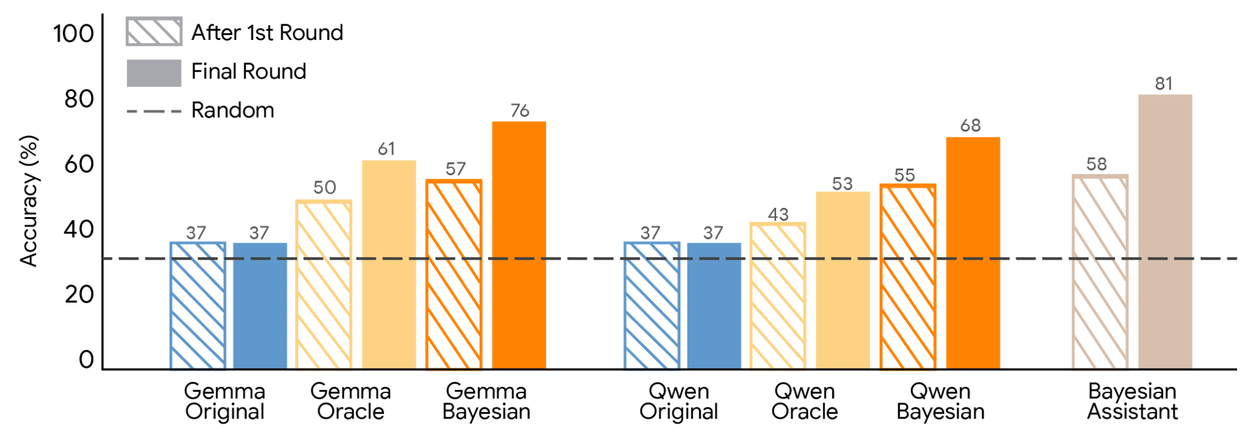

Results: LLMs vs Bayesian Assistant§

We evaluated multiple LLM families and compared them to:

- Human participants

- The Bayesian Assistant

Key findings:

- LLMs performed significantly worse than the Bayesian Assistant.

- The Bayesian Assistant gradually improved recommendations with each interaction.

- Many LLMs plateaued after a single interaction, showing limited ability to adapt to new information.

Humans performed better than most LLMs but still fell short of the optimal Bayesian strategy.

Accuracy comparison§

| Model | Accuracy |

|---|---|

| Bayesian Assistant | 81% |

| Humans | Lower than optimal |

| LLMs | Significantly lower |

The study evaluated recommendation accuracy after:

- Round 1

- Round 5

Across three interaction sets with 624 users.

In the Bayesian framework, an agent maintains a prior belief about the state of the world.

For an LLM, this “world state” represents its internal knowledge about:

- Facts

- Relationships

- Concepts

When new information appears, the model must update:

Prior belief → Posterior belief

Where:

- Prior = initial probability before new evidence

- Posterior = updated probability after incorporating evidence

This process repeats continuously as more information arrives.

Teaching LLMs probabilistic updates§

To teach models how to perform Bayesian reasoning, researchers used supervised fine-tuning.

The LLM updated its parameters after observing a large number of interactions between users and assistants.

Two strategies were used to generate training data.

In Oracle teaching, the LLM observed interactions between:

- A simulated user

- An oracle assistant

The oracle assistant had perfect knowledge of the user’s preferences and always recommended the exact choice the user would make.

In Bayesian teaching, the LLM observed interactions between:

- The simulated user

- The Bayesian Assistant

Unlike the oracle, the Bayesian assistant sometimes made incorrect predictions, especially during early rounds when uncertainty was high.

Researchers hypothesized that mimicking the Bayesian Assistant would help LLMs:

- Maintain uncertainty

- Update beliefs more realistically

This method is similar to knowledge distillation, where one system learns by imitating another model.

Fine-tuning improves probabilistic reasoning§

Both strategies significantly improved model performance.

However:

Bayesian teaching consistently outperformed Oracle teaching.

Models trained with Bayesian teaching:

- Agreed more often with the Bayesian Assistant

- Demonstrated stronger probabilistic reasoning

Cross-domain generalization§

Researchers also tested models on new domains not seen during training, including:

- Web shopping

- Hotel recommendations

Bayesian-trained models successfully transferred their reasoning abilities.

The green dashed line in the study represented the performance of models trained directly on web shopping data.

Even without direct training in this domain, Bayesian-trained models performed competitively.

Agreement with Bayesian reasoning§

Fine-tuned models showed a higher agreement rate with the Bayesian Assistant.

Bayesian versions of each model achieved the highest agreement and accuracy.

Bayesian teaching enabled models to:

- Agree with mathematical ideals about 80% of the time

- Become more sensitive to new information

- Weigh user choices based on how informative they are

Most importantly:

The learned reasoning skills were not task-specific.

Models trained on synthetic flight data successfully applied the same reasoning principles to:

- Web shopping

- Hotel recommendations

- Other recommendation tasks

This demonstrates that LLMs can internalize general probabilistic reasoning strategies.

The experiments showed that standard LLMs struggle to:

- Form probabilistic beliefs

- Update those beliefs through interaction

However, additional training using demonstrations from a Bayesian Assistant dramatically improved their reasoning capabilities.

This highlights the strength of the LLM post-training paradigm.

By showing models examples of optimal strategies, researchers can significantly improve their reasoning abilities.

By training LLMs to imitate a Bayesian reasoning system, researchers were able to transform models from:

Static pattern matchers → Adaptive reasoning agents

These models learned to:

- Maintain uncertainty

- Update beliefs

- Apply reasoning across different domains

This work demonstrates the potential of combining symbolic reasoning frameworks with neural networks, enabling more intelligent and adaptive AI systems in the future.

Key takeaways

- Training LLMs to imitate a Bayesian Assistant teaches them to maintain uncertainty and perform principled probabilistic updates.

- Bayesian teaching outperforms oracle-style demonstrations because it preserves informative uncertainty during learning.

- Fine-tuned models generalized reasoning skills across domains like shopping and hotel recommendations, not just the training task.

- LLMs initially underperform human and Bayesian baselines but can approach optimal strategies after targeted post-training.

- Combining symbolic Bayesian ideals with neural fine-tuning yields more adaptive, recommendation-capable agents.

FAQ

What is Bayesian teaching for LLMs?

Bayesian teaching is a fine-tuning method where LLMs learn to mimic an optimal Bayesian model’s predictions, teaching them to maintain and update probabilistic beliefs from observations.

How does Bayesian teaching differ from oracle teaching?

Oracle teaching shows perfect choices (no uncertainty), while Bayesian teaching exposes the model to the Bayesian Assistant's uncertain, probabilistic predictions, which better teaches belief-updating and generalization.

Do Bayesian-trained models generalize beyond the training task?

Yes — models trained on synthetic flight data transferred probabilistic reasoning to web shopping, hotel recommendations, and other domains without direct training on those tasks.

How much does Bayesian teaching improve performance?

Bayesian-trained models show substantially higher agreement with the Bayesian Assistant and can reach about 80% agreement, outperforming baseline LLMs and oracle-trained models on recommendation accuracy.