Google DeepMind Exposes Critical RAG Flaw: Why Embeddings Fail at Scale

RAG (Retrieval-Augmented Generation) systems typically use dense embedding models to map both queries and documents to fixed-dimensional vectors. This technique has dominated AI applications for good reason, yet Google DeepMind's latest research exposes a fundamental design limitation that persists regardless of model size or training quality.

Embedding Dimension Limits: The Numbers That Matter

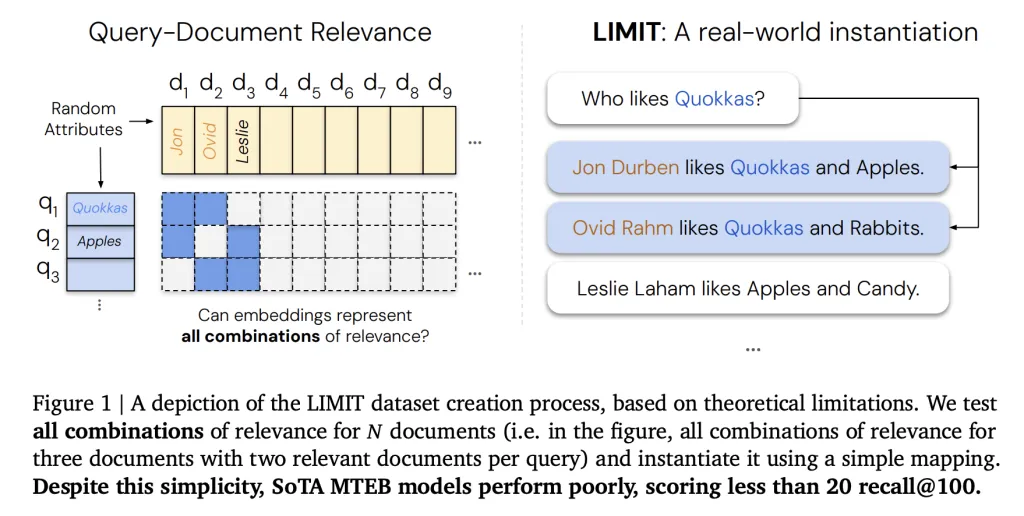

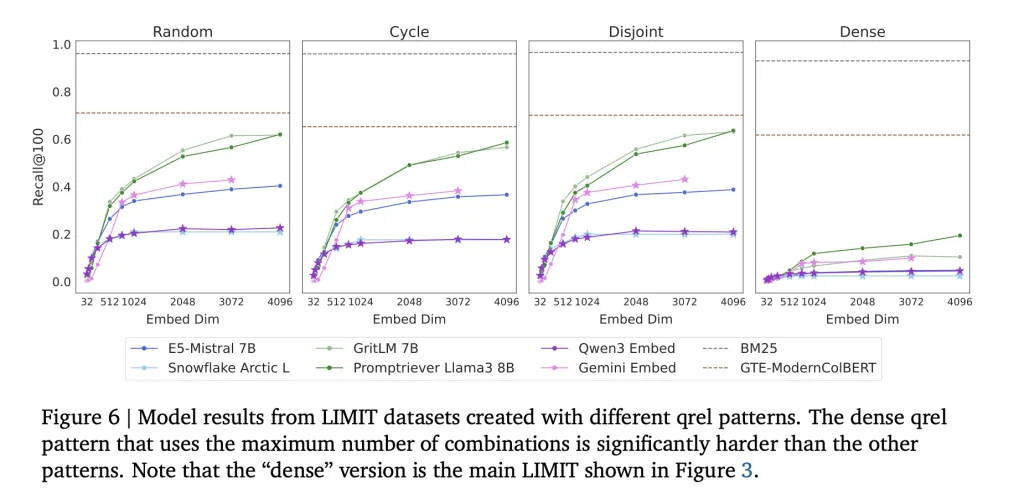

At the core of the issue is the representational capacity of fixed-size embeddings. An embedding of dimension d cannot represent all possible combinations of relevant documents once the database grows beyond a critical size. This follows from results in communication complexity and sign-rank theory.
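The smallest possible case makes the capacity argument concrete. The sketch below is an illustration written for this article, not code from the research: it embeds three documents as scalars (d = 1), scores them by dot product, and checks which top-2 sets any query can produce. The document values and the query sweep are arbitrary assumptions.

```python
from itertools import combinations

def top_k_set(query, docs, k):
    """Return the set of doc indices with the k highest dot-product scores."""
    scores = [(query * d, i) for i, d in enumerate(docs)]
    ranked = sorted(scores, reverse=True)
    return frozenset(i for _, i in ranked[:k])

# Three documents embedded as scalars (d = 1); arbitrary but distinct values.
docs = [-0.7, 0.2, 1.5]

# Sweep many nonzero scalar queries and collect every top-2 set that appears.
queries = [q / 10 for q in range(-50, 51) if q != 0]
reachable = {top_k_set(q, docs, k=2) for q in queries}

# Compare against all possible 2-document combinations.
all_pairs = {frozenset(p) for p in combinations(range(len(docs)), 2)}
unreachable = all_pairs - reachable

print("reachable top-2 sets:", sorted(sorted(s) for s in reachable))
print("unreachable top-2 sets:", sorted(sorted(s) for s in unreachable))
```

A positive query always returns the two largest values and a negative query the two smallest, so the pair made of the smallest and largest document can never be the top 2. Higher dimensions postpone this kind of gap rather than remove it.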

- For embeddings of size 512, retrieval breaks down around 500K documents.

- For 1024 dimensions, the limit extends to about 4 million documents.

- For 4096 dimensions, the theoretical ceiling is 250 million documents.

These values are best-case estimates derived under free embedding optimization, where vectors are directly optimized against test labels. Real-world language-constrained embeddings fail even earlier.
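As a back-of-the-envelope check on how those three figures relate, the sketch below computes the log-log slope between consecutive points. This is an observation made from the numbers quoted above, not the fitting procedure used in the research.

```python
import math

# (embedding dimension, best-case critical corpus size) as quoted in the text.
quoted = [(512, 500_000), (1024, 4_000_000), (4096, 250_000_000)]

def loglog_slope(p1, p2):
    """Exponent e such that n2 / n1 == (d2 / d1) ** e."""
    (d1, n1), (d2, n2) = p1, p2
    return math.log(n2 / n1) / math.log(d2 / d1)

slopes = [loglog_slope(a, b) for a, b in zip(quoted, quoted[1:])]
for (d1, _), (d2, _), s in zip(quoted, quoted[1:], slopes):
    print(f"{d1} -> {d2}: exponent ~ {s:.2f}")
```

Both exponents come out close to 3, which suggests the quoted ceiling grows roughly with the cube of the embedding dimension: doubling the dimension buys about eight times as many documents, not an unbounded number.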

The Benchmark That Broke Modern AI Systems


How Does the LIMIT Benchmark Expose This Problem?

- LIMIT full (50K documents): In this large-scale setup, even strong embedders collapse, with recall@100 often falling below 20%.

- LIMIT small (46 documents): Despite the simplicity of this toy-sized setup, models still fail to solve the task. Performance varies widely but remains far from reliable:

  - Promptriever Llama3 8B: 54.3% recall@2 (4096d)
  - GritLM 7B: 38.4% recall@2 (4096d)
  - E5-Mistral 7B: 29.5% recall@2 (4096d)
  - Gemini Embed: 33.7% recall@2 (3072d)

Even with just 46 documents, no embedder reaches full recall, highlighting that the limitation is not dataset size alone but the single-vector embedding architecture itself.
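For readers unfamiliar with the metric, here is a minimal sketch of recall@k under its usual definition (the share of a query's relevant documents that appear in the top k results), plus a count of how many 2-document combinations exist at each corpus size. The document IDs and ranking are invented, and treating each query as having exactly two relevant documents is an assumption made for the example.

```python
import math

def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("relevant_ids must not be empty")
    hits = len(set(ranked_ids[:k]) & relevant)
    return hits / len(relevant)

# Hypothetical query with two relevant documents; the ranking is made up.
ranked = ["doc7", "doc3", "doc12", "doc1"]
relevant = {"doc3", "doc12"}
print(recall_at_k(ranked, relevant, k=2))  # 0.5: only doc3 is in the top 2

# Number of distinct 2-document combinations a retriever could be asked for.
pairs_small = math.comb(46, 2)       # 1035
pairs_full = math.comb(50_000, 2)    # 1249975000
print(pairs_small, pairs_full)
```

Even 46 documents yield over a thousand possible pairs, so a toy-sized corpus can already demand more distinct top-2 results than a single vector per document can arrange.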

In contrast, BM25, a classical sparse lexical model, does not suffer from this ceiling. Sparse models operate in effectively unbounded dimensional spaces, allowing them to capture combinations that dense embeddings cannot.
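To show what "sparse" means in practice, the following is a minimal sketch of BM25 scoring in plain Python, where every vocabulary term acts as its own dimension. It uses the common Okapi formula with a smoothed IDF; the whitespace tokenizer, the parameter defaults `k1=1.5` and `b=0.75`, and the toy corpus are assumptions for illustration, not details from the research.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document against the query with Okapi BM25."""
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(toks) for toks in tokenized) / n_docs
    # Document frequency: in how many documents each term occurs.
    doc_freq = Counter(term for toks in tokenized for term in set(toks))

    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            df = doc_freq[term]
            idf = math.log((n_docs - df + 0.5) / (df + 0.5) + 1)
            length_norm = k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores

# Invented toy corpus: only the first document mentions both query terms.
docs = [
    "Jon likes apples and quokkas",
    "Mia likes quokkas and kites",
    "Leo likes apples and chess",
]
scores = bm25_scores("apples quokkas", docs)
print(scores)
```

Because each term contributes independently, the document matching both query terms scores highest without any fixed-size vector having to encode that combination in advance.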

Why Does This Matter for RAG?

Current RAG implementations typically assume that embeddings can scale indefinitely with more data. The Google DeepMind research team shows that this assumption is incorrect: embedding size inherently constrains retrieval capacity. This affects:

- Enterprise search engines handling millions of documents.

- Agentic systems that rely on complex logical queries.

- Instruction-following retrieval tasks, where queries define relevance dynamically.

Even advanced benchmarks like MTEB fail to capture these limitations because they test only a narrow subset of the possible query-document combinations.

What Are the Alternatives to Single-Vector Embeddings?

The research team suggests that scalable retrieval will require moving beyond single-vector embeddings:

- Cross-Encoders: Achieve perfect recall on LIMIT by directly scoring query-document pairs, but at the cost of high inference latency.

- Multi-Vector Models (e.g., ColBERT): Offer more expressive retrieval by assigning multiple vectors per sequence, improving performance on LIMIT tasks.

- Sparse Models (BM25, TF-IDF, neural sparse retrievers): Scale better in high-dimensional search but lack semantic generalization.

The key insight is that architectural innovation is required, not simply larger embedders.
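To illustrate why multiple vectors per sequence are more expressive, the sketch below compares a single-vector score with the MaxSim late-interaction score that ColBERT-style models use: each query vector takes its best match among the document's vectors, and the matches are summed. The 2-D token vectors are invented, and mean pooling is a hypothetical stand-in for how a single-vector model might compress a sequence.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def mean_pool(vecs):
    """Collapse a list of token vectors into one vector by averaging."""
    n = len(vecs)
    return [sum(col) / n for col in zip(*vecs)]

def single_vector_score(query_vecs, doc_vecs):
    """One pooled vector per side, compared with a dot product."""
    return dot(mean_pool(query_vecs), mean_pool(doc_vecs))

def maxsim_score(query_vecs, doc_vecs):
    """Late interaction: sum over query vectors of the best doc-vector match."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)

# Toy 2-D token vectors, made up for illustration.
query_vecs = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0]]  # covers both query tokens
doc_b = [[1.0, 0.0], [1.0, 0.0]]  # covers only the first query token

print(single_vector_score(query_vecs, doc_a), single_vector_score(query_vecs, doc_b))
print(maxsim_score(query_vecs, doc_a), maxsim_score(query_vecs, doc_b))
```

In this toy case the pooled scores tie at 0.5 for both documents, while MaxSim gives 2.0 to the document that covers both query tokens and 1.0 to the other. It is a contrived example rather than a benchmark result, but it shows the kind of distinction a single pooled vector can lose.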

What is the Key Takeaway?

The research team’s analysis shows that dense embeddings, despite their success, are bound by a mathematical limit: they cannot capture all possible relevance combinations once corpus sizes exceed limits tied to embedding dimensionality. The LIMIT benchmark demonstrates this failure concretely:

- On LIMIT full (50K docs): recall@100 drops below 20%.

- On LIMIT small (46 docs): even the best models max out at ~54% recall@2.

Classical techniques like BM25, or newer architectures such as multi-vector retrievers and cross-encoders, remain essential for building reliable retrieval engines at scale.

Key takeaways

- Dense single-vector embeddings have a provable capacity ceiling tied to embedding dimension, causing retrieval failures at scale.

- Empirical benchmarks (LIMIT) show that even small setups expose embedding collapse: recall remains far from perfect.

- Sparse methods (BM25) and multi-vector or cross-encoder architectures avoid the single-vector ceiling and should be considered for large corpora.

- Solving RAG at scale requires architectural innovation rather than just larger embedding models.

FAQ

What fundamental flaw did Google DeepMind find in RAG embeddings?

They showed that fixed-size dense embeddings have a mathematical capacity limit: once a corpus exceeds sizes tied to the embedding dimension, embeddings cannot represent all relevance combinations reliably.

At what corpus sizes do embeddings typically break down?

Best-case estimates: 512-d embeddings around 500K docs, 1024-d around 4M docs, and 4096-d near 250M docs, with practical language-constrained embeddings failing earlier.

Do sparse models like BM25 avoid this limitation?

Yes. Sparse lexical methods operate in effectively unbounded dimensional spaces and don't face the same combinatorial ceiling, though they trade off semantic generalization.

What practical alternatives exist to single-vector embeddings?

Options include cross-encoders (accurate but slow), multi-vector models like ColBERT (more expressive per document), and sparse or neural sparse retrievers, each with tradeoffs in latency and scalability.