I Gave the Same Prompt to Every Image AI. Here’s What Actually Won

I decided to keep things fair.

Same prompt. Same expectations. No tweaks.

I ran one identical prompt across some of the most talked-about image generation models right now and compared the results side by side.

Here’s the lineup:

- Nano Banana

- Nano Banana Pro

- Definable AI Photo Studio

- DALL·E 3

- Grok 2 Image (xAI)

- Google Image Gen 4.0

- GPT Image 1

#### No filters. No bias. Just output vs output.

The Results

The difference was obvious.

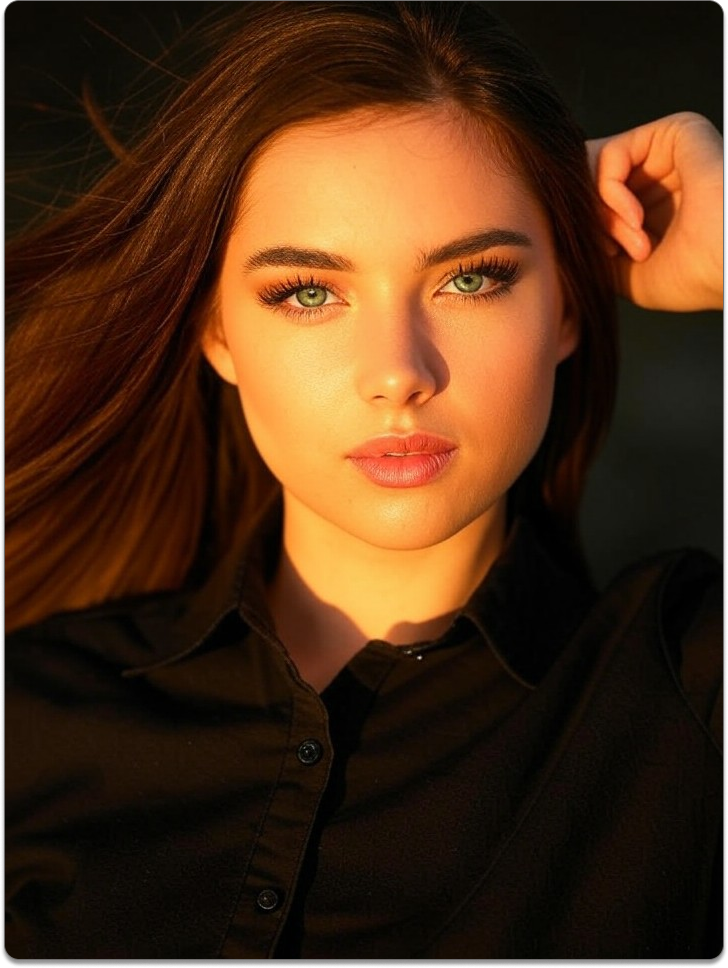

Most models delivered decent images — some had good composition, some nailed lighting, some felt creative but inconsistent.

But two stood out immediately.

Nano Banana Pro and Definable AI Photo Studio.

They didn’t just generate images — they understood the prompt.

Details were sharper. Subjects made sense. The vibe actually matched what was asked instead of guessing.

Why Definable AI Photo Studio Hit Different

Definable AI Photo Studio felt intentional.

- Cleaner visuals

- Better realism and balance

- Fewer weird artifacts

- Strong sense of style

It looked less like “AI trying” and more like “AI delivering.”

Nano Banana Pro Deserves the Hype

Nano Banana Pro came in strong.

It handled complexity better than most and stayed consistent with the prompt without drifting.

If you care about control and clarity, it showed up.

Final Take

After testing everything with the exact same prompt, the winners were clear:

🏆 Definable AI Photo Studio

🏆 Nano Banana Pro

The rest weren’t bad — but these two were on another level.

Less randomness. More intent.

And that’s the difference that actually matters.

Key takeaways

- Running one identical prompt across multiple models highlights which AIs truly understand intent versus guessing.

- Definable AI Photo Studio and Nano Banana Pro produced the sharpest, most realistic, and least artifact-prone results in this test.

- Other models showed strengths in composition or creativity but were more inconsistent and produced more artifacts.

- Less randomness and clearer prompt interpretation lead to more usable, production-ready AI images.

FAQ

What made Definable AI Photo Studio stand out?

Definable AI Photo Studio delivered cleaner visuals, fewer artifacts, better realism, and a stronger match to the prompt, making outputs feel intentional rather than guesswork.

Is Nano Banana Pro better for complex prompts?

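In this test, yes. Nano Banana Pro handled the prompt's complexity better than most of the lineup and stayed consistent with it, showing strong control and clarity. Keep in mind that this was a single-prompt comparison, so results may differ on other complex prompts.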

Can other image models match these results with prompt tweaks?

Sometimes — many models improve with prompt engineering, parameter tuning, or multiple iterations, but in this identical-prompt test those two models performed best out of the box.

How can I reproduce this comparison?

Use the exact same prompt, disable extra filters or auto-enhancements, fix the seed if available, and compare outputs side-by-side to evaluate composition, artifacts, and realism.
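If you want to script those steps, here is a minimal sketch of a comparison harness in Python. It is not the setup used for this post. Each entry in `generators` is a hypothetical wrapper you would write yourself around a provider's SDK or API, taking a prompt and a seed and returning raw image bytes. Not every model exposes a seed, so a wrapper can simply ignore that argument. The function names, the default seed, and the file naming are all assumptions made for illustration.

```python
import hashlib
import re
import tempfile
from pathlib import Path
from typing import Callable, Dict

# Assumed (hypothetical) wrapper signature: (prompt, seed) -> image bytes.
# Real provider calls are not shown; you would write one wrapper per model.
Generator = Callable[[str, int], bytes]


def slugify(name: str) -> str:
    """Turn a model name into a safe file stem, e.g. 'DALL·E 3' -> 'dall_e_3'."""
    return re.sub(r"[^a-z0-9]+", "_", name.lower()).strip("_")


def run_comparison(
    prompt: str,
    generators: Dict[str, Generator],
    out_dir: str,
    seed: int = 1234,
) -> Dict[str, Dict[str, str]]:
    """Send the identical prompt and seed to every model and save each output."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    results = {}
    for name, generate in generators.items():
        image_bytes = generate(prompt, seed)  # same prompt, same seed, no tweaks
        path = out / f"{slugify(name)}.png"
        path.write_bytes(image_bytes)
        results[name] = {
            "path": str(path),
            # The hash lets you confirm later which file came from which run.
            "sha256": hashlib.sha256(image_bytes).hexdigest(),
        }
    return results


if __name__ == "__main__":
    # Placeholder generator so the sketch runs without any API keys.
    def placeholder_model(prompt: str, seed: int) -> bytes:
        return f"{prompt}|{seed}".encode("utf-8")

    saved = run_comparison(
        "your test prompt here",
        {"Model A": placeholder_model, "Model B": placeholder_model},
        tempfile.mkdtemp(),
    )
    for model, info in saved.items():
        print(model, info["path"])
```

Once the files are saved, open them side by side and judge composition, artifacts, and realism by eye. The harness only guarantees that every model received the same input.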

Are there licensing or commercial-use differences between these models?

Yes — each provider has its own licensing and usage terms, so check the model's commercial and copyright policies before using images in paid or public projects.